Kubernetes on AWS without complications, by Altostratus

In this post we explain how to abstract away the complexity of a container orchestration platform using EKS Blueprints, a powerful framework that lets us deploy a production-ready platform in very little time and manage it with GitOps techniques.

We tend to describe a technology framework as an abstraction layer on top of a programming language: an add-on or construct that bundles a set of features, tools and a structural approach. Extending a programming language with additional modules is fairly straightforward by nature. Creating abstractions made of tools and logical entities that remain useful across different contexts is considerably harder.

Based on this definition, we present EKS Blueprints, a framework backed by AWS and maintained by the community around Kubernetes. It comes in two flavors, Terraform and CDK, and was developed to make it easier to build a container orchestration platform on top of EKS, under production conditions and with a wide selection of tools.

What are EKS Blueprints?

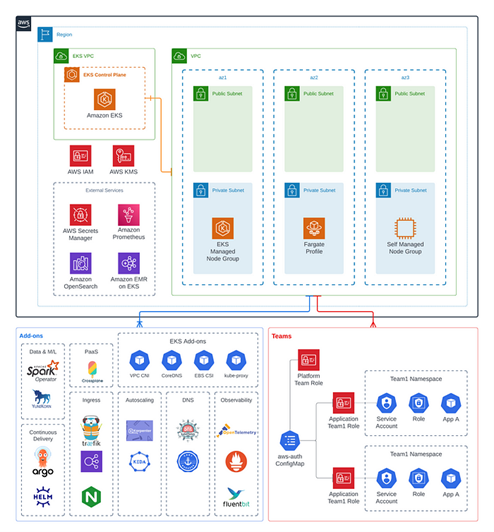

In the case of EKS Blueprints, the additional tools are deployment management utilities and libraries, most of them CNCF projects. GitOps serves as the philosophy for managing and structuring projects, Terraform and CDK are the languages used to extend the framework, and a set of logical entities represents the roles of the people who operate a Kubernetes-based container platform. Under this model, each team works in its own area but shares a common language (YAML), alongside the language specific to its area: Terraform or CDK for the platform, or the application's own language for workloads.

The architecture diagram above depicts an EKS environment that can be configured and deployed with EKS Blueprints. It shows an EKS cluster that runs across three Availability Zones, is bootstrapped with a wide range of Kubernetes add-ons, and hosts workloads from several different teams.

Brief analysis of the framework

The documentation offers several examples of increasing complexity, and the project follows semantic versioning and is spread across several repositories. For the Terraform flavor, version 4.29.0 had just been released at the time of writing, and there is already speculation about version 5, which, according to its repository, will bring major changes to dependency management and module structure. There is also abundant documentation and teaching material in the form of workshops, which we share at the end of the article.
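Because the project follows semantic versioning, a jump from the 4.x line to version 5 signals changes that may break existing stacks. The sketch below is only an illustration of that rule: the helper names are hypothetical, and "5.0.0" is an assumed version string, since the article only mentions "version 5".

```typescript
interface Version {
  major: number;
  minor: number;
  patch: number;
}

// Parse a strict "MAJOR.MINOR.PATCH" string (no pre-release or build suffixes).
function parseVersion(text: string): Version {
  const match = /^(\d+)\.(\d+)\.(\d+)$/.exec(text);
  if (match === null) {
    throw new Error(`not a MAJOR.MINOR.PATCH version: ${text}`);
  }
  return {
    major: Number(match[1]),
    minor: Number(match[2]),
    patch: Number(match[3]),
  };
}

// Under semantic versioning, only a higher MAJOR number announces
// backwards-incompatible changes.
function isMajorUpgrade(from: string, to: string): boolean {
  return parseVersion(to).major > parseVersion(from).major;
}

console.log(isMajorUpgrade("4.28.0", "4.29.0")); // false: minor bump
console.log(isMajorUpgrade("4.29.0", "5.0.0")); // true: review before upgrading
```

In practice this is the reason to pin the module version in a stack and plan the move to a new major version as a separate piece of work.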

Pros

The main strength of this tool is how easy it makes deploying a production-ready environment. With a relatively simple Terraform stack, we can define our EKS cluster and, in the same context, add modules that provide varied but essential functionality: observability, deployments, file system management and much more.

This construct lets us lay the foundations for receiving workloads and give other teams the autonomy to operate them within the conditions and limits we define. The possibilities are enormous and the time savings are remarkable. It also relies on AWS IAM roles for its operation, which fits very well with cloud governance. Finally, it leaves room for more complex structures, such as multi-tenant clusters.
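The idea described above, one declaration that holds the cluster, its add-ons and the teams with their limits, can be sketched in plain TypeScript. This is a conceptual model only: every type, field and value below is hypothetical and none of it comes from the EKS Blueprints Terraform or CDK APIs.

```typescript
// Hypothetical model of a blueprint; not the EKS Blueprints API.
type AddOn = "observability" | "gitops-deployments" | "file-storage";

interface Team {
  name: string; // team that operates the workloads
  namespace: string; // Kubernetes namespace the team is confined to
  maxPods: number; // limit imposed by the platform team
}

interface Blueprint {
  clusterName: string;
  addOns: AddOn[];
  teams: Team[];
}

// Return a list of problems; an empty list means the blueprint is consistent.
function validateBlueprint(bp: Blueprint): string[] {
  const problems: string[] = [];
  const seenNamespaces = new Set<string>();
  for (const team of bp.teams) {
    if (seenNamespaces.has(team.namespace)) {
      problems.push(`namespace "${team.namespace}" is assigned to more than one team`);
    }
    seenNamespaces.add(team.namespace);
    if (team.maxPods <= 0) {
      problems.push(`team "${team.name}" needs a positive pod limit`);
    }
  }
  if (new Set(bp.addOns).size !== bp.addOns.length) {
    problems.push("an add-on is listed more than once");
  }
  return problems;
}

// Example values are made up for illustration.
const platform: Blueprint = {
  clusterName: "demo-cluster",
  addOns: ["observability", "gitops-deployments", "file-storage"],
  teams: [
    { name: "payments", namespace: "payments", maxPods: 50 },
    { name: "search", namespace: "search", maxPods: 30 },
  ],
};

console.log(validateBlueprint(platform)); // empty array: no problems found
```

The point of the model is that the platform team owns a single declaration, while each workload team only sees its own namespace and the limits attached to it.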

Cons

As a point for improvement, I think it would help a lot to centralize the code and examples in a single GitHub organization and organize them better. Most of the material is currently spread across three large organizations and numerous directories and branches, the result of what appears to be organic growth combined with the use of automated distribution tools.

What reason would lead me to use it?

The main reason to use this tool is that it combines accumulated experience with next-generation container orchestration, and it is an open source solution. Adopting it, or at least evaluating it, lets us become productive quickly and in a sustainable way.

Some processes are worth doing by hand at least once while learning. This practice is part of many learning paths, and experimenting for oneself leads to a better understanding and internalization of the concepts and their dependencies. Setting up a Kubernetes cluster, configuring it and connecting it with tools like ArgoCD, managing permissions and so on is a good way to achieve this. However, it is not always feasible.

As an additional point, it is not an irreversible decision: it is possible to operate containers without all this tooling, although much less comfortably.

I want to know more

Below we share a series of resources to learn more about this tool:

- Creating clusters with EKS Blueprints | Amazon Web Services
  https://aws.amazon.com/es/blogs/containers/bootstrapping-clusters-with-eks-blueprints/
- Continuous deployment and delivery GitOps with Amazon EKS Blueprints and ArgoCD | Amazon Web…
  https://aws.amazon.com/es/blogs/containers/continuous-deployment-and-gitops-delivery-with-amazon-eks-blueprints-and-argocd/
- CDK: Documentation and workshop
  – https://aws-quickstart.github.io/cdk-eks-blueprints/
  – https://catalog.workshops.aws/eks-blueprints-for-cdk/en-US/010-introduction/1-what-is-eks-blueprints/archdiagram
- Repository with examples:
  – Platform: https://github.com/Cloud-DevOps-Labs/kubernetes-in-aws-the-easy-way
  – Workload: https://github.com/Cloud-DevOps-Labs/kubernetes-in-aws-workload-gitops-management